Draft Documentation

This page is currently under development. Content may be incomplete or subject to change.

This page makes extensive use of AI-generated documentation.

Automated Verification#

Automated verification enables SysGit to integrate with external test systems, simulation tools, and hardware-in-the-loop (HIL) environments to automatically update verification status based on actual test execution. This transforms verification from a manual, document-centric process into an automated, model-based workflow with complete traceability.

Overview#

SysGit's approach to automated verification leverages the Python object model (sysgit-py) to:

- Read verification case definitions from SysML v2 models

- Execute or interface with test systems

- Collect test results from external sources

- Update verification verdicts programmatically

- Commit changes back to Git for full audit trail

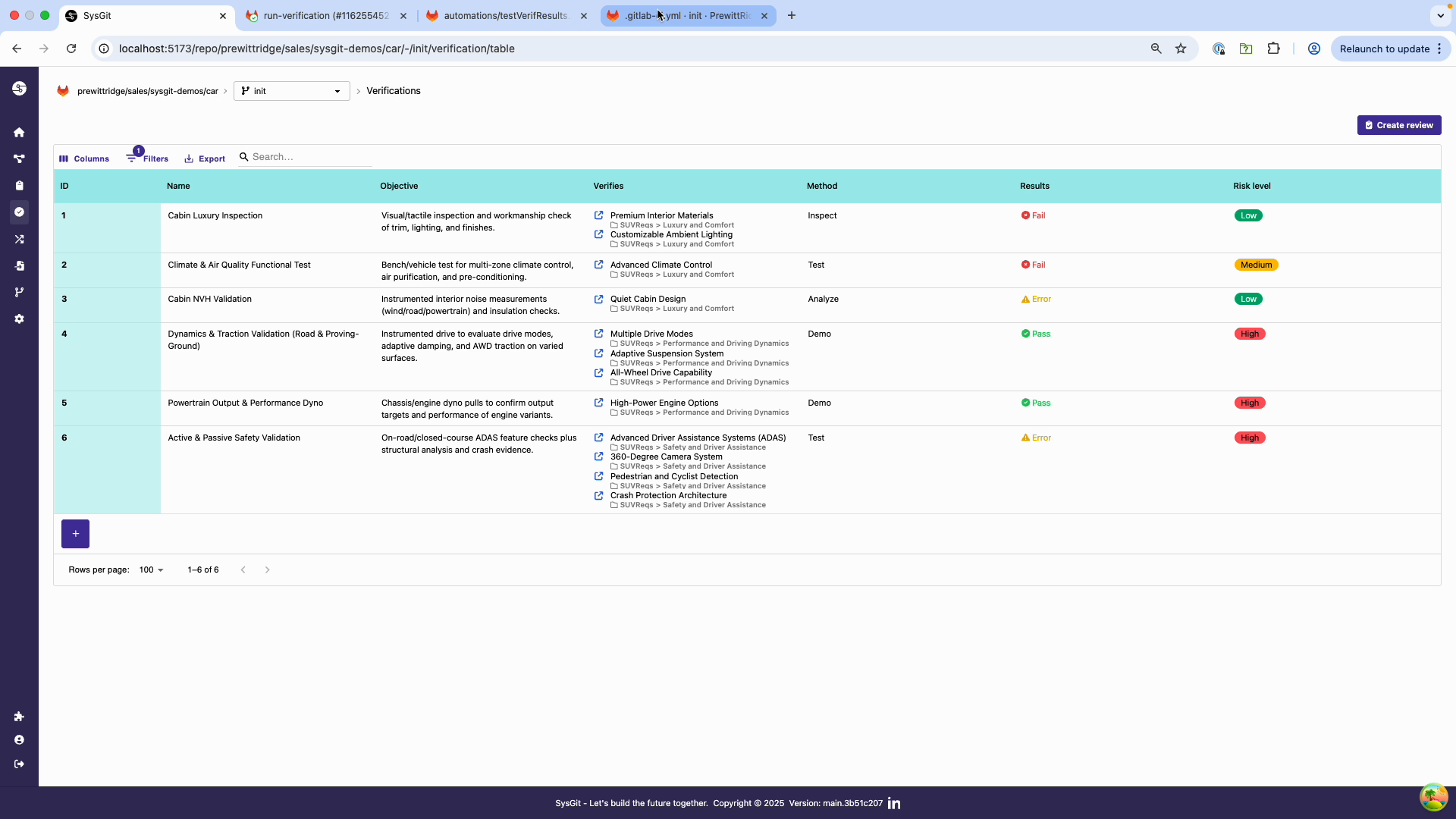

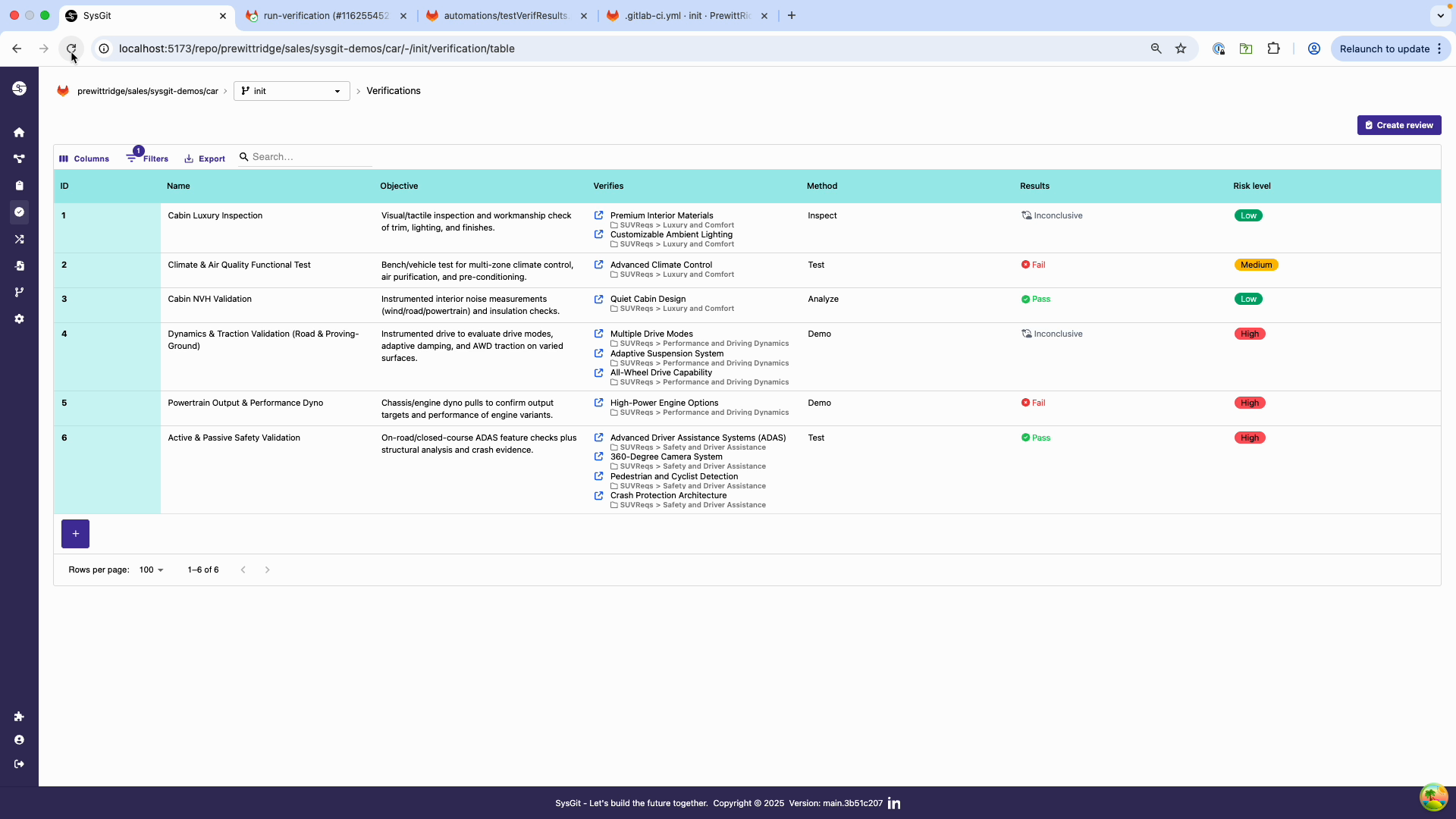

Verification verdicts automatically updated by CI/CD pipeline based on test execution


Linking to Test Systems#

Test Automation Frameworks#

SysGit can integrate with any test framework that produces machine-readable results. Common integration patterns include:

Python Test Frameworks#

For pytest, unittest, or custom Python test frameworks:

import sysgit as sg
import json

# Load test results from pytest JSON report
with open('test_results.json', 'r') as f:
    test_results = json.load(f)

# Load SysML model
model = sg.read("requirements.sysml")
verifications = model.select(sg.VerificationCaseUsage)

# Map test results to verifications
for v in verifications:
    test_name = v.qualified_name.split("::")[-1].strip("'")
    if test_name in test_results:
        result = test_results[test_name]
        if result['status'] == 'PASSED':
            v["verdict"].value = "VerdictKind::pass"
        elif result['status'] == 'FAILED':
            v["verdict"].value = "VerdictKind::fail"
        else:
            v["verdict"].value = "VerdictKind::error"

# Write updated model
model.write("requirements.sysml", overwrite=True)
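
The example above assumes `test_results.json` is a dictionary keyed by test name, with an upper-case `status` field per entry. Reports written by pytest plugins are often shaped differently, for instance as a list of tests that each carry a `nodeid` and a lower-case `outcome`. The sketch below shows one possible adapter for that case. The report layout, the `index_by_test_name` helper, and the stripping of the `test_` prefix are all assumptions to check against your own report files.

```python
def index_by_test_name(report):
    """Convert a list-style report into {test_name: {"status": ...}}.

    Assumes report["tests"] is a list of dicts with "nodeid" and
    "outcome" keys (hypothetical layout; verify against your report).
    """
    indexed = {}
    for test in report.get("tests", []):
        # "tests/test_cabin.py::test_Cabin_Inspection" -> "test_Cabin_Inspection"
        func_name = test["nodeid"].split("::")[-1]
        # Drop any parametrization suffix such as "[case0]".
        func_name = func_name.split("[")[0]
        # Drop the conventional "test_" prefix so names can match the model.
        if func_name.startswith("test_"):
            func_name = func_name[len("test_"):]
        indexed[func_name] = {"status": test["outcome"].upper()}
    return indexed


sample_report = {
    "tests": [
        {"nodeid": "tests/test_cabin.py::test_Cabin_Inspection", "outcome": "passed"},
        {"nodeid": "tests/test_cabin.py::test_Door_Seal", "outcome": "failed"},
    ]
}
indexed_results = index_by_test_name(sample_report)
print(indexed_results)
```

Parametrized variants of one test collapse onto a single key in this sketch, so the last variant wins. If that matters, keep the suffix in the key instead.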

Hardware-in-the-Loop (HIL) Systems#

For HIL test systems that output structured data:

import sysgit as sg
import csv

# Read HIL test results from CSV
hil_results = {}
with open('hil_results.csv', 'r') as f:
    reader = csv.DictReader(f)
    for row in reader:
        hil_results[row['test_case']] = {
            'verdict': row['pass_fail'],
            'timestamp': row['timestamp'],
            'details': row['notes']
        }

# Map HIL outcomes to SysML verdicts
verdict_map = {
    'PASS': 'VerdictKind::pass',
    'FAIL': 'VerdictKind::fail',
    'INCONCLUSIVE': 'VerdictKind::inconclusive'
}

# Update verifications
model = sg.read("requirements.sysml")
verifications = model.select(sg.VerificationCaseUsage)

for v in verifications:
    name = v.qualified_name.split("::")[-1].strip("'")
    if name in hil_results:
        # Unrecognized outcomes fall back to inconclusive instead of raising KeyError
        outcome = hil_results[name]['verdict']
        v["verdict"].value = verdict_map.get(outcome, 'VerdictKind::inconclusive')

model.write("requirements.sysml", overwrite=True)

Software Test Automation#

Integration with test management systems like TestRail or Jama Connect:

import sysgit as sg
import requests

# Fetch test results from TestRail API
response = requests.get(
    'https://your-instance.testrail.com/api/v2/get_results_for_run/123',
    auth=('username', 'api_key'),
    timeout=30
)
response.raise_for_status()
payload = response.json()
# Some TestRail versions wrap the list in a paginated object with a 'results' key
test_results = payload['results'] if isinstance(payload, dict) else payload

# Update SysGit model
model = sg.read("requirements.sysml")
verifications = model.select(sg.VerificationCaseUsage)

# Map TestRail results to SysGit verdicts
status_map = {
    1: 'VerdictKind::pass',          # Passed
    5: 'VerdictKind::fail',          # Failed
    2: 'VerdictKind::inconclusive',  # Blocked
    4: 'VerdictKind::inconclusive'   # Retest
}

for v in verifications:
    # Match by test case ID stored in verification metadata
    if hasattr(v, 'metadata') and 'testrail_case_id' in v.metadata:
        case_id = v.metadata['testrail_case_id']
        for result in test_results:
            # Assumes each result carries a case_id; unmapped statuses (e.g. 3, Untested) are skipped
            if result.get('case_id') == case_id and result.get('status_id') in status_map:
                v["verdict"].value = status_map[result['status_id']]

model.write("requirements.sysml", overwrite=True)
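
The loop above matches on a `case_id` field in each result. TestRail results typically reference a `test_id` instead, with the case ID available on the run's tests, so an intermediate lookup may be needed. The sketch below shows that join on plain lists of dictionaries, with no network access. The field names (`id`, `case_id`, `test_id`, `status_id`, `created_on`) and the `results_by_case_id` helper are assumptions to verify against your TestRail instance.

```python
def results_by_case_id(tests, results):
    """Map case_id -> status_id of the most recent result for that case.

    tests:   list of dicts with "id" and "case_id" (one per test in the run)
    results: list of dicts with "test_id", "status_id", and "created_on"
             (assumed to be a sortable timestamp, e.g. UNIX seconds)
    """
    test_to_case = {t["id"]: t["case_id"] for t in tests}
    newest = {}
    for r in results:
        case_id = test_to_case.get(r["test_id"])
        # Skip results for unknown tests and comment-only entries without a status.
        if case_id is None or r.get("status_id") is None:
            continue
        if case_id not in newest or r["created_on"] > newest[case_id]["created_on"]:
            newest[case_id] = r
    return {case_id: r["status_id"] for case_id, r in newest.items()}


sample_tests = [{"id": 10, "case_id": 501}, {"id": 11, "case_id": 502}]
sample_results = [
    {"test_id": 10, "status_id": 5, "created_on": 1700000000},
    {"test_id": 10, "status_id": 1, "created_on": 1700003600},  # later retest passed
    {"test_id": 11, "status_id": None, "created_on": 1700000100},  # comment only
]
case_status = results_by_case_id(sample_tests, sample_results)
print(case_status)
```

The resulting dictionary can then be looked up by the `testrail_case_id` stored on each verification, which also avoids the nested loop over all results.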

Results Ingestion#

SysGit can ingest test results from various formats:

JSON Results#

import sysgit as sg
import json

def load_json_results(results_file):
    """Load test results from JSON file."""
    with open(results_file, 'r') as f:
        return json.load(f)

def map_verdict(status):
    """Map test status to SysML VerdictKind."""
    mapping = {
        'PASSED': 'VerdictKind::pass',
        'FAILED': 'VerdictKind::fail',
        'ERROR': 'VerdictKind::error',
        'SKIPPED': 'VerdictKind::inconclusive',
    }
    return mapping.get(status, 'VerdictKind::inconclusive')

# Main ingestion logic
model = sg.read("requirements.sysml")
results = load_json_results("test_results.json")
verifications = model.select(sg.VerificationCaseUsage)

for v in verifications:
    name = v.qualified_name.split("::")[-1].strip("'")
    if name in results:
        v["verdict"].value = map_verdict(results[name]['status'])

model.write("requirements.sysml", overwrite=True)

XML Results (JUnit format)#

import sysgit as sg
import xml.etree.ElementTree as ET

def parse_junit_xml(xml_file):
    """Parse JUnit XML test results."""
    tree = ET.parse(xml_file)
    root = tree.getroot()

    results = {}
    for testcase in root.findall('.//testcase'):
        name = testcase.get('name')
        classname = testcase.get('classname')

        if testcase.find('failure') is not None:
            results[name] = 'VerdictKind::fail'
        elif testcase.find('error') is not None:
            results[name] = 'VerdictKind::error'
        elif testcase.find('skipped') is not None:
            results[name] = 'VerdictKind::inconclusive'
        else:
            results[name] = 'VerdictKind::pass'

    return results

# Update model with JUnit results
model = sg.read("requirements.sysml")
results = parse_junit_xml("junit_results.xml")
verifications = model.select(sg.VerificationCaseUsage)

for v in verifications:
    name = v.qualified_name.split("::")[-1].strip("'")
    if name in results:
        v["verdict"].value = results[name]

model.write("requirements.sysml", overwrite=True)
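
`parse_junit_xml` above keys results by the `name` attribute alone, so two test cases that share a name in different classes overwrite each other. A minimal sketch of one way around this is to key on `classname` and `name` together. The `verdicts_by_full_name` helper and the sample XML are illustrative only, and the `classname::name` key format is an arbitrary choice.

```python
import xml.etree.ElementTree as ET

JUNIT_SAMPLE = """<testsuites>
  <testsuite name="pytest" tests="3">
    <testcase classname="tests.test_cabin" name="test_inspection"/>
    <testcase classname="tests.test_engine" name="test_inspection">
      <failure message="torque out of range"/>
    </testcase>
    <testcase classname="tests.test_engine" name="test_cold_start">
      <skipped message="rig unavailable"/>
    </testcase>
  </testsuite>
</testsuites>"""


def verdicts_by_full_name(xml_text):
    """Return {"classname::name": verdict} for every <testcase> element."""
    verdicts = {}
    for case in ET.fromstring(xml_text).iter("testcase"):
        key = f"{case.get('classname')}::{case.get('name')}"
        # Same precedence as parse_junit_xml: failure, error, skipped, else pass.
        if case.find("failure") is not None:
            verdicts[key] = "VerdictKind::fail"
        elif case.find("error") is not None:
            verdicts[key] = "VerdictKind::error"
        elif case.find("skipped") is not None:
            verdicts[key] = "VerdictKind::inconclusive"
        else:
            verdicts[key] = "VerdictKind::pass"
    return verdicts


junit_verdicts = verdicts_by_full_name(JUNIT_SAMPLE)
for key, verdict in sorted(junit_verdicts.items()):
    print(key, verdict)
```

With this keying, the verification side needs a matching convention, for example storing the fully qualified test name alongside each verification case.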

CSV Results#

import sysgit as sg
import csv

def load_csv_results(csv_file):
    """Load test results from CSV."""
    results = {}
    with open(csv_file, 'r') as f:
        reader = csv.DictReader(f)
        for row in reader:
            results[row['test_name']] = {
                'status': row['status'],
                'timestamp': row['timestamp'],
                'duration': row['duration']
            }
    return results

# Update model
model = sg.read("requirements.sysml")
results = load_csv_results("test_results.csv")

verdict_map = {
    'pass': 'VerdictKind::pass',
    'fail': 'VerdictKind::fail',
    'error': 'VerdictKind::error'
}

verifications = model.select(sg.VerificationCaseUsage)
for v in verifications:
    name = v.qualified_name.split("::")[-1].strip("'")
    if name in results:
        status = results[name]['status'].lower()
        v["verdict"].value = verdict_map.get(status, 'VerdictKind::inconclusive')

model.write("requirements.sysml", overwrite=True)

Status Updates#

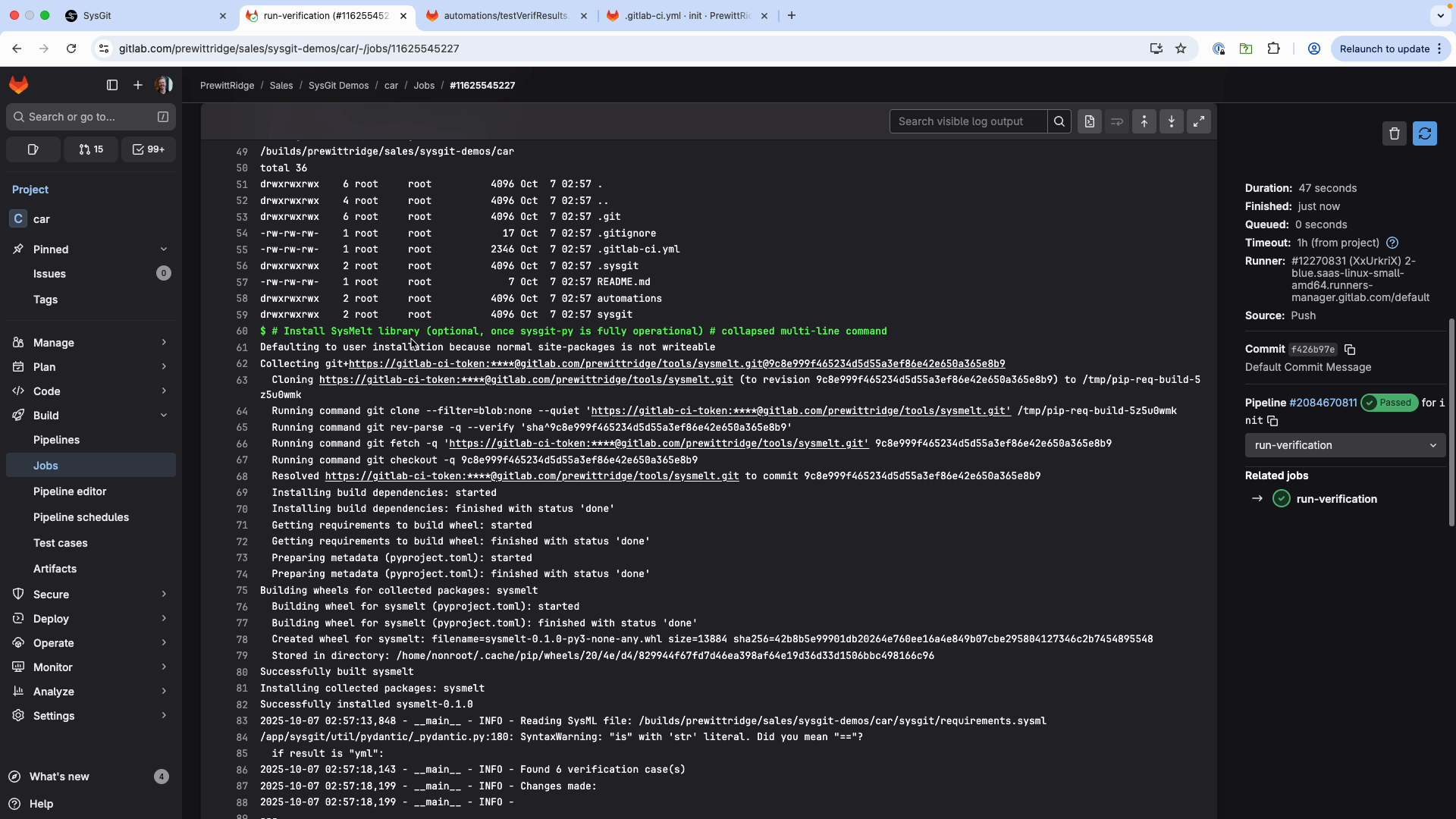

GitLab pipeline showing automated verification status updates


Real-Time Updates via CI/CD#

Configure GitLab CI/CD to run verification updates on every test execution:

stages:
  - test
  - update-verifications

run-tests:
  stage: test
  script:
    - pytest tests/ --junit-xml=test_results.xml
  artifacts:
    # Upload the report even when tests fail, so failures can be recorded
    when: always
    paths:
      - test_results.xml

update-verifications:
  stage: update-verifications
  needs: [run-tests]
  # Run even if run-tests failed; otherwise failing verdicts are never written
  when: always
  image: registry.gitlab.com/prewittridge/tools/sysgit-py/sysgit-py:0.1.5-amd64
  script:
    - python3 automations/ingest_test_results.py test_results.xml
    - |
      git config user.name "GitLab Runner"
      git config user.email "noreply@gitlab.com"
      git add sysgit/requirements.sysml
      git commit -m "chore: auto-update verification results [skip ci]"
      git push origin HEAD:${CI_COMMIT_REF_NAME}

Batch Updates#

For batch processing of historical test results:

import sysgit as sg
import glob
import json

model = sg.read("requirements.sysml")
verifications = model.select(sg.VerificationCaseUsage)

# Process all test result files (sorted so the processing order is predictable)
all_results = {}
for results_file in sorted(glob.glob("test_results/*.json")):
    with open(results_file, 'r') as f:
        results = json.load(f)
    all_results.update(results)

# Update verifications, counting only those that actually changed source
updated_count = 0
for v in verifications:
    name = v.qualified_name.split("::")[-1].strip("'")
    if name in all_results:
        v["verdict"].value = all_results[name]['verdict']
        updated_count += 1

model.write("requirements.sysml", overwrite=True)
print(f"Updated {updated_count} verifications from {len(all_results)} test results")
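
When several files report the same test, `dict.update` lets whichever file is processed last win, regardless of when the test actually ran. If each record carries a timestamp, the merge can keep the most recent result instead. The sketch below assumes a `timestamp` field in a uniform ISO 8601 format, so that string comparison orders records chronologically. That field and the `merge_latest` helper are not part of the example above.

```python
def merge_latest(result_sets):
    """Merge several {name: record} dicts, keeping the newest record per name.

    Assumes every record has a "timestamp" string in one consistent
    ISO 8601 format (hypothetical field), so plain comparison works.
    """
    merged = {}
    for results in result_sets:
        for name, record in results.items():
            current = merged.get(name)
            if current is None or record["timestamp"] > current["timestamp"]:
                merged[name] = record
    return merged


older = {"Brake Response": {"verdict": "VerdictKind::fail",
                            "timestamp": "2024-01-10T09:00:00"}}
newer = {"Brake Response": {"verdict": "VerdictKind::pass",
                            "timestamp": "2024-01-11T09:00:00"}}

# The newer record wins even though its file is processed first.
merged_results = merge_latest([newer, older])
print(merged_results["Brake Response"]["verdict"])
```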

Simulation Integration#

Connecting to Analysis Tools#

SysGit can integrate with simulation and analysis tools to automatically update verification status based on simulation results.

MATLAB/Simulink Integration#

import sysgit as sg
import scipy.io

def evaluate_criteria(sim_results, verification):
    """Placeholder: return True when the simulation data satisfies the verification."""
    # Implementation depends on your model structure and simulation outputs
    raise NotImplementedError

# Load MATLAB simulation results
mat_data = scipy.io.loadmat('simulation_results.mat')
sim_results = mat_data['results'][0]

model = sg.read("requirements.sysml")
verifications = model.select(sg.VerificationCaseUsage)

# Evaluate simulation results against requirements
for v in verifications:
    # Extract verification criteria from model
    # (implementation depends on your model structure)

    # Evaluate simulation data
    if evaluate_criteria(sim_results, v):
        v["verdict"].value = "VerdictKind::pass"
    else:
        v["verdict"].value = "VerdictKind::fail"

model.write("requirements.sysml", overwrite=True)
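
`evaluate_criteria` in the example above is project-specific. As one possible shape, the sketch below checks metrics extracted from a simulation against `(min, max)` limits. It takes a plain `limits` dictionary rather than the verification object, because how criteria are read from the model is not specified here. The metric names and limit values are hypothetical.

```python
def evaluate_criteria(metrics, limits):
    """Return True when every limited metric is present and within bounds.

    metrics: {"overshoot_pct": 4.2, ...} values extracted from the simulation
    limits:  {"overshoot_pct": (None, 5.0), ...} as (min, max); None = unbounded
    """
    for key, (lower, upper) in limits.items():
        value = metrics.get(key)
        if value is None:
            return False  # missing data cannot demonstrate compliance
        if lower is not None and value < lower:
            return False
        if upper is not None and value > upper:
            return False
    return True


sim_metrics = {"overshoot_pct": 4.2, "settling_time_s": 1.8}
sim_limits = {"overshoot_pct": (None, 5.0), "settling_time_s": (None, 2.0)}
criteria_met = evaluate_criteria(sim_metrics, sim_limits)
print("VerdictKind::pass" if criteria_met else "VerdictKind::fail")
```

Treating a missing metric as a failure is a conservative choice. Mapping it to `VerdictKind::inconclusive` instead would need a three-valued return rather than a boolean.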

CAE/FEA Tool Integration#

For finite element analysis or computational fluid dynamics results:

import sysgit as sg

def load_fea_results(results_file):
    """Load FEA results (e.g., from Nastran, ANSYS)."""
    # Parse results file (format depends on tool).
    # Placeholder values are returned here for illustration.
    return {
        'max_stress': 450.0,        # MPa
        'max_displacement': 2.3,    # mm
        'safety_factor': 1.8
    }

# Update structural verification cases
model = sg.read("requirements.sysml")
verifications = model.select(sg.VerificationCaseUsage)
fea_results = load_fea_results("structural_analysis.rst")

for v in verifications:
    name = v.qualified_name.split("::")[-1].strip("'")
    if 'Structural' in name:
        # Check against stress limits
        if fea_results['max_stress'] < 500.0:  # Allowable stress (MPa)
            v["verdict"].value = "VerdictKind::pass"
        else:
            v["verdict"].value = "VerdictKind::fail"

model.write("requirements.sysml", overwrite=True)

Model-Based Systems Engineering (MBSE) Tool Integration#

Integration with tools like Cameo Systems Modeler or MagicDraw:

import sysgit as sg
import json

# Export simulation results from Cameo/MagicDraw
# (typically via custom plugins or APIs)
def load_mbse_simulation(results_file):
    """Load parametric simulation results from MBSE tool."""
    with open(results_file, 'r') as f:
        return json.load(f)

model = sg.read("requirements.sysml")
verifications = model.select(sg.VerificationCaseUsage)
sim_results = load_mbse_simulation("parametric_sim.json")

for v in verifications:
    # Match verification to simulation by name or ID
    # Evaluate simulation results against verification criteria
    # Update verdict accordingly
    pass

model.write("requirements.sysml", overwrite=True)

Verification via Simulation#

Trade Study Analysis#

Automatically verify requirements based on trade study results:

import sysgit as sg
import pandas as pd

# Load trade study results
trade_data = pd.read_csv('trade_study_results.csv')

model = sg.read("requirements.sysml")
verifications = model.select(sg.VerificationCaseUsage)

for v in verifications:
    name = v.qualified_name.split("::")[-1].strip("'")

    # Find corresponding trade study
    if name in trade_data['verification_name'].values:
        row = trade_data[trade_data['verification_name'] == name].iloc[0]

        # Check if selected design meets requirements
        if row['meets_requirement']:
            v["verdict"].value = "VerdictKind::pass"
        else:
            v["verdict"].value = "VerdictKind::fail"

model.write("requirements.sysml", overwrite=True)

Monte Carlo Analysis#

Update verification status based on probabilistic analysis:

import sysgit as sg
import numpy as np

def monte_carlo_analysis(requirement_spec, num_iterations=10000):
    """Estimate the probability that a simulated quantity meets the requirement."""
    # Simulate with uncertainty distributions
    results = []
    for _ in range(num_iterations):
        # Your simulation logic here
        result = np.random.normal(100, 10)  # Example
        results.append(result)

    # Calculate probability of meeting requirement
    prob_success = np.mean(np.array(results) >= requirement_spec)
    return prob_success

model = sg.read("requirements.sysml")
verifications = model.select(sg.VerificationCaseUsage)

for v in verifications:
    # Extract requirement specification
    # Run Monte Carlo analysis
    prob = monte_carlo_analysis(requirement_spec=95.0)

    # Update verdict based on the estimated probability of success
    if prob >= 0.95:  # at least 95% of samples meet the requirement
        v["verdict"].value = "VerdictKind::pass"
    elif prob >= 0.50:
        v["verdict"].value = "VerdictKind::inconclusive"
    else:
        v["verdict"].value = "VerdictKind::fail"

model.write("requirements.sysml", overwrite=True)
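
Because the verdict depends on random draws, an unseeded run can flip between verdicts when the estimated probability sits near a threshold. The sketch below uses only the standard library to show a seeded estimate and a separate threshold mapping. The function names, the seed parameter, and the distribution parameters are illustrative assumptions, with the thresholds taken from the example above.

```python
import random


def estimate_success_probability(threshold, mean, stddev,
                                 iterations=10_000, seed=0):
    """Estimate P(sample >= threshold) for a normal distribution.

    A fixed seed makes the estimate reproducible between pipeline runs.
    """
    rng = random.Random(seed)
    hits = sum(1 for _ in range(iterations)
               if rng.gauss(mean, stddev) >= threshold)
    return hits / iterations


def verdict_from_probability(prob, pass_at=0.95, fail_below=0.50):
    """Map an estimated success probability to a verdict string."""
    if prob >= pass_at:
        return "VerdictKind::pass"
    if prob >= fail_below:
        return "VerdictKind::inconclusive"
    return "VerdictKind::fail"


prob = estimate_success_probability(threshold=95.0, mean=100.0, stddev=10.0)
print(verdict_from_probability(prob))
```

For a normal distribution with mean 100 and standard deviation 10, the true probability of reaching 95 is about 0.69, so these parameters land in the inconclusive band rather than passing.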

Complete Example: Test System Integration#

Verification table before automated updates from test execution


Here's a complete example showing end-to-end integration with a test system:

import sysgit as sg
import json
import logging
import argparse
import sys

# Set up logging
logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)

def parse_args():
    """Parse command line arguments."""
    parser = argparse.ArgumentParser(
        description='Update verification verdicts from test results.'
    )
    parser.add_argument('sysml_file', help='Path to SysML file')
    parser.add_argument('test_results', help='Path to test results JSON')
    parser.add_argument('-o', '--output', help='Output file path')
    return parser.parse_args()

def load_test_results(results_file):
    """Load test results from JSON file."""
    with open(results_file, 'r') as f:
        return json.load(f)

def map_verdict(test_status):
    """Map test status to SysML VerdictKind."""
    mapping = {
        'PASSED': 'VerdictKind::pass',
        'FAILED': 'VerdictKind::fail',
        'ERROR': 'VerdictKind::error',
        'SKIPPED': 'VerdictKind::inconclusive',
    }
    return mapping.get(test_status, 'VerdictKind::inconclusive')

def update_verifications(model, test_results):
    """Update verification verdicts based on test results."""
    verifications = model.select(sg.VerificationCaseUsage)
    updated_count = 0

    for v in verifications:
        # Extract verification name
        name = v.qualified_name.split("::")[-1].strip("'")

        # Check if test result exists
        if name in test_results:
            test_result = test_results[name]
            verdict = map_verdict(test_result['status'])
            v["verdict"].value = verdict
            logger.info(f"Updated '{name}': {test_result['status']} -> {verdict}")
            updated_count += 1
        else:
            logger.warning(f"No test result found for: {name}")

    return updated_count

def main():
    args = parse_args()

    try:
        # Load model
        logger.info(f"Reading SysML file: {args.sysml_file}")
        model = sg.read(args.sysml_file)

        # Load test results
        logger.info(f"Loading test results: {args.test_results}")
        test_results = load_test_results(args.test_results)

        # Update verifications
        updated = update_verifications(model, test_results)
        logger.info(f"Updated {updated} verification(s)")

        # Save model
        output_file = args.output if args.output else args.sysml_file
        logger.info(f"Writing updated model: {output_file}")
        model.write(output_file, overwrite=True)

        logger.info("Done!")
        return 0

    except Exception as e:
        logger.error(f"Error: {e}")
        return 1

if __name__ == "__main__":
    sys.exit(main())

Usage in GitLab CI/CD:

update-verifications:
  stage: update-verifications
  needs: [run-tests]
  script:
    - python3 automations/update_from_tests.py sysgit/requirements.sysml test_results.json

Best Practices#

Naming Conventions#

Maintain consistent naming between test cases and verification cases:

# Good: Direct name mapping
test_name = "Cabin_Luxury_Inspection"
verification_name = "Cabin Luxury Inspection"

# Use standardized formatting function
def normalize_name(name):
    """Convert between test and verification naming."""
    return name.replace('_', ' ').title()
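
One caveat with `str.title()` is that it lower-cases everything after the first letter of each word, so acronyms change: `"HIL_Power_Cycle"` becomes `"Hil Power Cycle"` and no longer matches a verification named `"HIL Power Cycle"`. A minimal sketch of an alternative is to compare both sides through a case- and separator-insensitive key instead of rewriting one name into the other. The `match_key` and `build_lookup` helpers are hypothetical names introduced here.

```python
def match_key(name):
    """Build a case- and separator-insensitive key for comparing names."""
    # Underscores become spaces, repeated whitespace collapses, case is ignored.
    return " ".join(name.replace("_", " ").split()).casefold()


def build_lookup(test_results):
    """Re-key a {test_name: data} dict by match_key for tolerant lookups."""
    return {match_key(name): data for name, data in test_results.items()}


lookup = build_lookup({"HIL_Power_Cycle": {"status": "PASSED"}})
found = lookup.get(match_key("HIL Power Cycle"))
print(found)
```

Inside the update loop, the lookup would be `lookup.get(match_key(name))` in place of the exact-match `name in test_results` check.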

Error Handling#

Always validate test results before updating the model:

def validate_test_results(results):
    """Ensure each test result has the required fields."""
    # The test name is the dictionary key, so it is not required as a field
    required_fields = ['status', 'timestamp']

    for test_name, test_data in results.items():
        for field in required_fields:
            if field not in test_data:
                raise ValueError(f"Missing field '{field}' in test '{test_name}'")

    return True

Audit Trail#

Log all updates for compliance and debugging:

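import json
from datetime import datetime

# Assumes `verifications`, `test_results`, and `map_verdict` are defined
# as in the earlier examples.

# Create detailed audit log
audit_log = []

for v in verifications:
    name = v.qualified_name.split("::")[-1].strip("'")
    if name not in test_results:
        continue

    old_verdict = v["verdict"].value
    new_verdict = map_verdict(test_results[name]['status'])

    if old_verdict != new_verdict:
        audit_log.append({
            'timestamp': datetime.now().isoformat(),
            'verification': name,
            'old_verdict': old_verdict,
            'new_verdict': new_verdict,
            'test_id': test_results[name].get('test_id')
        })
        v["verdict"].value = new_verdict

# Save audit log, one JSON object per line so appended runs stay parseable
with open('verification_updates.log', 'a') as f:
    for entry in audit_log:
        f.write(json.dumps(entry) + "\n")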

Handling Partial Results#

Gracefully handle incomplete test data:

def update_with_partial_results(model, test_results):
    """Update only verifications with available test results."""
    verifications = model.select(sg.VerificationCaseUsage)

    updated = []
    skipped = []

    for v in verifications:
        name = v.qualified_name.split("::")[-1].strip("'")

        if name in test_results:
            v["verdict"].value = map_verdict(test_results[name]['status'])
            updated.append(name)
        else:
            skipped.append(name)

    logger.info(f"Updated: {len(updated)}, Skipped: {len(skipped)}")
    return updated, skipped

See Also#

- CI/CD Overview - General CI/CD concepts and architecture

- Custom Automation - Building custom automation scripts

- Verification Management - Manual verification workflows